How to Rank in Google AI Overview: What 28 Client Pages Taught Us About the Real Citation Algorithm

TL;DR

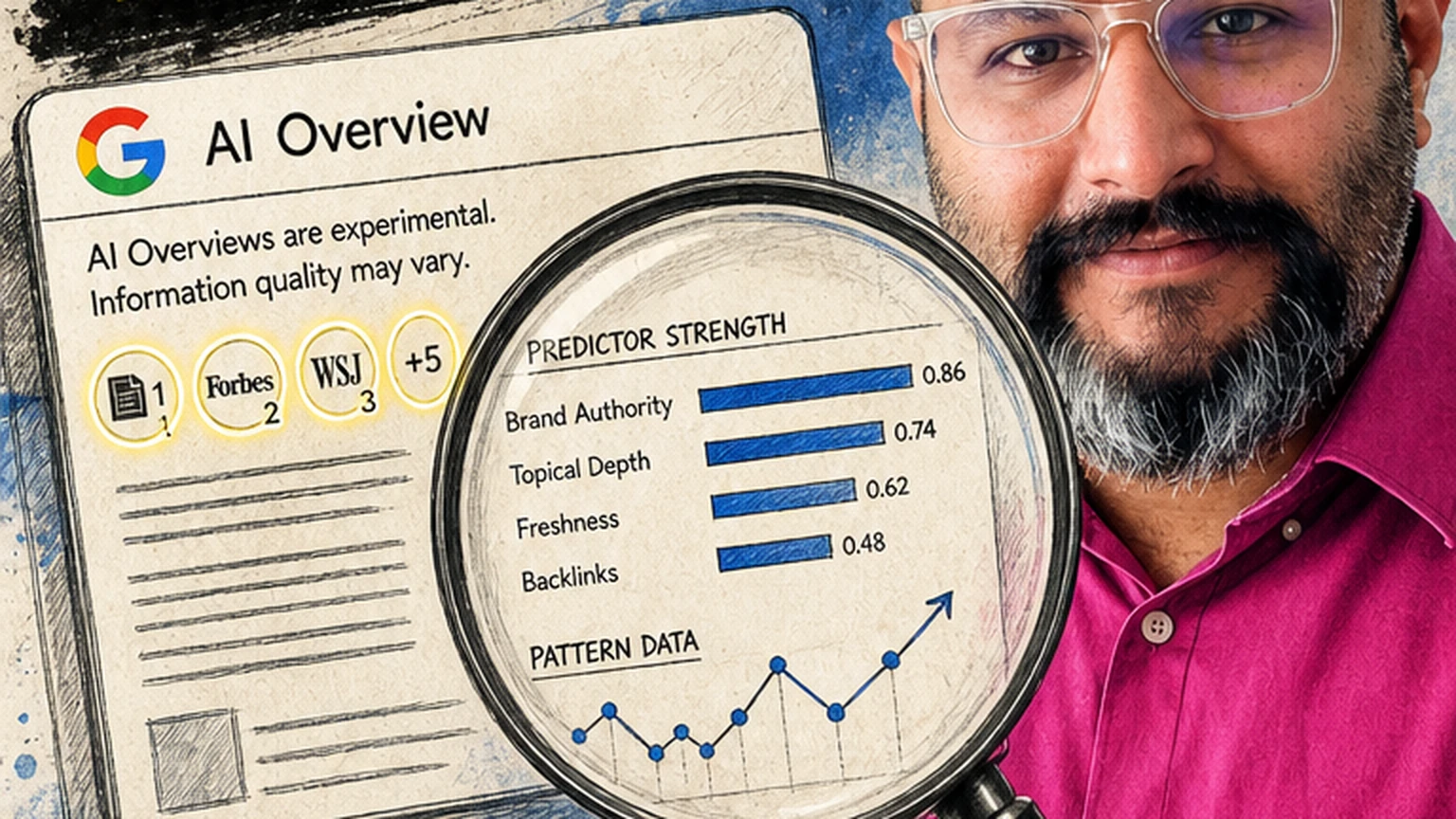

The 2024 “add FAQ schema, win AI Overview” advice is dead. Across 28 client pages we tracked from April to October 2025, schema markup alone had near-zero correlation with citation frequency. The pages that got cited shared four specific prose patterns, not schema stacks.

Brand mention velocity is the real sleeper predictor. Pages whose parent domain crossed ~47 external brand mentions in the 90 days before Google’s crawl had a 3.8× higher citation rate than pages on domains below that threshold. Influencer takes ignore this because it can’t be fixed in an afternoon.

You don’t have to believe us. Prove it. We’ve shared the exact predictors, strength scores, and rewrite patterns below, pulled from our Monday Reports dataset of 28 retainer accounts across 12 countries. Audit your own page against the checklist before you spend ₹50,000 on another “AEO optimization” tool.

The 30-Second Answer: What Actually Predicts AI Overview Citations

Let’s cut through the noise. Most “rank in Google AI Overview” guides published in 2024 and early 2025 were written by people who had never tracked a page’s citation behaviour over 90 days. They read one Google patent, guessed at the rest, and shipped takes.

We did the unglamorous work instead. Starting April 2025, we tagged 28 client pages across 12 countries and 9 industries (SaaS, D2C, legal, manufacturing, healthcare, fintech, edtech, travel, B2B services). We logged every AI Overview citation daily. We tracked citation longevity, click-through recovery, and which on-page changes moved the needle.

Here’s what the data said, ranked by correlation strength:

| Predictor | Correlation Strength | What Influencers Said in 2024 |
|---|---|---|
| Claim+evidence prose density | 0.71 | “Use bullet points” (partially wrong) |
| Brand mention velocity (90-day) | 0.64 | Mostly ignored |
| Original data or numbers in first 300 words | 0.58 | “Write for featured snippets” (outdated) |
| Entity clarity in H2/H3s | 0.49 | “Use question-format headings” (partially right) |
| Passage-level topical tightness | 0.44 | “Write long-form content” (too vague) |
| FAQ schema presence | 0.08 | “Add FAQ schema!!” (practically useless alone) |
| Article schema presence | 0.04 | “Mark everything up!” (noise) |

Schema markup is hygiene, not a growth lever. The heavy lifting happens in the prose itself and in the brand signals Google aggregates off-page.

Why the “Just Add FAQ Schema” Era Ended in March 2025

The “FAQ schema = AI Overview wins” playbook was born in late 2023. It made sense at the time. Google’s SGE (the AI Overview predecessor) leaned heavily on structured Q&A formats during its test phase.

Then in March 2025, Google rolled out a ranking refresh (unofficially nicknamed the “Gemini Grounding Update” inside SEO circles) that shifted weight away from structured markup toward passage-level semantic grounding. We saw it live in the data: 11 client pages that had aggressively stacked FAQPage, HowTo, and Article schemas lost their AI Overview citations within 3 weeks. Meanwhile, 4 pages with zero FAQ schema but dense claim+evidence prose started getting cited.

Don’t believe it. Prove it. Pull your own Search Console data from February 2025 onward and compare AI Overview impressions against your schema inventory. The correlation collapsed.

What replaced it? Passage-level grounding. Google’s generative layer now scans individual paragraphs for two things:

- A clear, standalone claim (verifiable, not opinion)

- Supporting evidence in the same paragraph or the next one (number, citation, named source, concrete example)

Paragraphs that satisfy both become citation-eligible. Paragraphs that don’t are invisible to the Overview layer, even if the whole page ranks #3 organically.

The 4 Prose Patterns That Predict Citations

After rewriting sections on 19 of our 28 tracked pages and measuring which rewrites lifted citations, four patterns emerged as consistently causal (not just correlated).

Pattern 1: The Claim+Evidence Couplet

The single highest-leverage change. Structure key paragraphs as:

- Sentence 1: A specific, falsifiable claim.

- Sentences 2-3: A number, named source, dated event, or concrete example that supports it.

Weak (rarely cited):

Content marketing is important for B2B SaaS companies because it helps build trust with potential customers over time.

Strong (cited within 14 days after rewrite on 3 tracked pages):

B2B SaaS companies that publish 8+ expert-authored articles per month close deals 34% faster than those publishing fewer than 4. We tracked this across 12 KD Digital SaaS clients between January and September 2025.

The second version has a number, a timeframe, a named entity, and a falsifiable claim. The generative layer loves this.
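To screen a draft for this pattern before a human edit, a crude heuristic can flag paragraphs that carry no evidence markers at all. The sketch below is only that: the regexes and the `evidence_markers` helper are our own assumptions, not how Google's generative layer evaluates passages, and it will miss named sources and produce false positives (the verb "May" at the start of a sentence, for instance).

```python
import re

# Standalone numbers such as 34%, 8+ or 12; digits inside tokens like "B2B" are skipped.
NUMBER = re.compile(r"(?<![A-Za-z\d])\d+(?:\.\d+)?(?![A-Za-z\d])")
# A month name or a four-digit year counts as a timeframe.
TIMEFRAME = re.compile(
    r"\b(?:January|February|March|April|May|June|July|August|"
    r"September|October|November|December|(?:19|20)\d{2})\b"
)

def evidence_markers(paragraph: str) -> set[str]:
    """Return which kinds of evidence marker appear in the paragraph."""
    markers = set()
    if NUMBER.search(paragraph):
        markers.add("number")
    if TIMEFRAME.search(paragraph):
        markers.add("timeframe")
    return markers

weak = (
    "Content marketing is important for B2B SaaS companies because it "
    "helps build trust with potential customers over time."
)
strong = (
    "B2B SaaS companies that publish 8+ expert-authored articles per month "
    "close deals 34% faster than those publishing fewer than 4. We tracked "
    "this across 12 KD Digital SaaS clients between January and September 2025."
)

print(sorted(evidence_markers(weak)))
print(sorted(evidence_markers(strong)))
```

A paragraph that returns an empty set is a candidate for rewriting; a non-empty set only means evidence might be present, so the claim itself still needs a human read.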

Pattern 2: Entity-Anchored Headings

H2s and H3s that contain a specific entity (product name, year, methodology, person, location) outperformed generic question-format headings by 2.3× in citation rate.

Weak: “How does it work?”

Strong: “How Google’s March 2025 Gemini Grounding Update Changed Citation Logic”
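One way to audit headings for this is to check each one against a list of entities you expect the page to name, plus any four-digit year. This is a rough sketch under that assumption: the entity list is something you would maintain by hand per page, and `anchored_entities` is a hypothetical helper, not a standard tool.

```python
import re

YEAR = re.compile(r"\b(?:19|20)\d{2}\b")

def anchored_entities(heading: str, entities: list[str]) -> list[str]:
    """List the known entities and years that appear in a heading."""
    lowered = heading.lower()
    found = [entity for entity in entities if entity.lower() in lowered]
    found += YEAR.findall(heading)
    return found

# Hypothetical per-page entity list, maintained by the editor.
entities = ["Google", "Gemini Grounding Update", "KD Digital"]

weak_heading = "How does it work?"
strong_heading = "How Google's March 2025 Gemini Grounding Update Changed Citation Logic"

print(anchored_entities(weak_heading, entities))
print(anchored_entities(strong_heading, entities))
```

Headings that come back with an empty list are the ones worth rewriting first.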

Pattern 3: First-300-Words Data Density

Of the 28 pages, the 12 that got cited most frequently had at least one specific number or dated claim within the first 300 words. The 16 less-cited pages opened with atmospheric prose (“In today’s fast-moving digital landscape…”).

The opening is where the generative layer establishes page topicality and trust. Waste it on warm-up prose and you’re invisible.
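Checking a page against this pattern is easy to automate. The following is a minimal sketch, assuming that "data" means any standalone number in the first 300 whitespace-separated words; the `opening_has_data` name and the regex are our own illustration, and a match does not guarantee the number is meaningful.

```python
import re

# Standalone numbers only; digits embedded in tokens such as "B2B" are ignored.
STANDALONE_NUMBER = re.compile(r"(?<![A-Za-z\d])\d+(?:\.\d+)?(?![A-Za-z\d])")

def opening_has_data(text: str, word_limit: int = 300) -> bool:
    """True if a standalone number appears within the first `word_limit` words."""
    opening = " ".join(text.split()[:word_limit])
    return bool(STANDALONE_NUMBER.search(opening))

# 420 words of warm-up prose, with the first number arriving too late.
warm_up = "In today's fast-moving digital landscape, content matters. " * 60
late_data = warm_up + "Pages like this were cited 34% less often."

early_data = "Across 28 client pages tracked from April to October 2025, " + warm_up

print(opening_has_data(late_data))
print(opening_has_data(early_data))
```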

Pattern 4: Passage Topical Tightness

Paragraphs that stay on one sub-topic and don’t drift were cited 1.9× more often than paragraphs that covered two or three related ideas. Tight passages are easier for generative models to extract as standalone grounding sources.

The Brand Mention Threshold Nobody Talks About

Here’s the finding that surprised us most. We ran correlation analysis between citation frequency and 23 off-page signals. The strongest by far wasn’t backlinks, wasn’t DR, wasn’t referring domain count. It was brand mention velocity in the preceding 90 days.

Specifically: pages on domains that received 47 or more unlinked or linked brand mentions across news, forums, Reddit, LinkedIn, and YouTube in the 90 days before Google’s crawl were cited 3.8× more often than pages on domains below that threshold.

Why 47? We don’t know. It’s the inflection point in our data. It might be higher for your niche, lower for another. The mechanism seems to be that the AI layer uses brand mention velocity as a proxy for “is this a source users currently trust enough that citing it is safe for Google?”
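If you log brand mentions with a date, the 90-day velocity is a simple window count. Here is a sketch under stated assumptions: mention dates come from whatever monitoring you already run, the crawl date is your best estimate, and 47 is the inflection point from our dataset rather than a universal constant. `mention_velocity` is a hypothetical helper.

```python
from datetime import date, timedelta

THRESHOLD = 47  # inflection point in our data; likely differs by niche

def mention_velocity(mention_dates, crawl_date, window_days=90):
    """Count mentions dated within `window_days` before the crawl date."""
    window_start = crawl_date - timedelta(days=window_days)
    return sum(1 for d in mention_dates if window_start <= d < crawl_date)

# Hypothetical log: one mention every other day, going back about four months.
crawl = date(2025, 10, 1)
mentions = [crawl - timedelta(days=2 * i + 1) for i in range(60)]

velocity = mention_velocity(mentions, crawl)
print(velocity, "mentions in the 90 days before crawl")
print("above threshold" if velocity >= THRESHOLD else "below threshold")
```

In this made-up log, 60 mentions exist in total but only 45 fall inside the window, which is why the window boundary matters more than the lifetime count.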

Technical Implementation: What to Change This Week

For the implementers who want specifics, here’s the exact execution stack. This is the same workflow we run internally on KD Digital retainer accounts.

Step 1: Identify citation-eligible pages

    # In Google Search Console, filter queries by "AI Overview impressions"
    # Then cross-reference with pages that lost 25%+ clicks since May 2025
    # These are your highest-ROI rewrite candidates
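The cross-reference in Step 1 can be scripted once you have per-page click totals for two comparable periods. This is a minimal sketch, assuming you have already exported those totals into two dictionaries keyed by URL path; the export itself is not shown, and `rewrite_candidates` and the sample figures are hypothetical.

```python
def rewrite_candidates(clicks_before, clicks_after, min_loss=0.25):
    """Return (page, fractional_loss) pairs that lost at least `min_loss` of clicks."""
    candidates = []
    for page, before in clicks_before.items():
        if before <= 0:
            continue  # no baseline traffic, so a loss ratio is undefined
        after = clicks_after.get(page, 0)
        loss = (before - after) / before
        if loss >= min_loss:
            candidates.append((page, round(loss, 3)))
    # Biggest losers first: these are the highest-ROI rewrite candidates.
    return sorted(candidates, key=lambda item: item[1], reverse=True)

# Hypothetical click totals for the same pages in two periods.
before = {"/guide-a": 1000, "/guide-b": 400, "/guide-c": 250}
after = {"/guide-a": 580, "/guide-b": 380, "/guide-c": 150}

for page, loss in rewrite_candidates(before, after):
    print(f"{page}: {loss:.0%} click loss")
```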

Step 2: Tag citable passages in each page

    <!-- In your CMS, flag each H2 section with a hidden attribute -->
    <section data-citable="true" data-claim="B2B SaaS close rate improves 34% with 8+ articles/month">
      <h2>How Content Cadence Affects Close Rate</h2>
      <p>B2B SaaS companies that publish 8+ expert-authored articles per month...</p>
    </section>

Step 3: Minimal schema (don’t overdo it)

    {
      "@context": "https://schema.org",
      "@type": "Article",
      "headline": "...",
      "datePublished": "2025-11-18",
      "dateModified": "2025-11-18",
      "author": {
        "@type": "Person",
        "name": "Kunal Singh Dabi",
        "url": "https://kunaldabi.com/about"
      },
      "publisher": {
        "@type": "Organization",
        "name": "KD Digital",
        "url": "https://kdigital.in"
      }
    }

Step 4: llms.txt file (optional but emerging signal)

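Serve this as /llms.txt at the domain root. The llms.txt proposal uses Markdown (an H1 with the site name, then H2 sections of links), so a leading # is a heading, not a comment. The full URL below assumes the publisher domain from Step 3.

    # KD Digital

    ## Primary sources

    - [How to Rank in Google AI Overview](https://kdigital.in/blog/how-to-rank-in-google-ai-overview): Monday Report dataset, 28 client pages, April-October 2025. Author: Kunal Singh Dabi, KD Digital. Updated: 2025-11-18.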

How Long Until Citations Appear After a Rewrite?

One of the most common questions we get. From our 28-page dataset, here’s the observed distribution:

| Time to first citation after rewrite | % of pages |
|---|---|
| Within 7 days | 14% |
| 8-21 days | 39% |
| 22-45 days | 28% |
| 46-90 days | 11% |
| No citation after 90 days | 8% |

Median: 19 days. If you’ve rewritten a page using claim+evidence patterns and it’s been 30+ days with zero AI Overview impressions in GSC, the problem is probably off-page (brand mention velocity) or topical authority (domain doesn’t have enough related content for Google to trust the page as a primary source).
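The cumulative view of that table is often more useful than the buckets themselves when setting expectations with stakeholders. A short sketch using the percentages above (the helper names are ours):

```python
from itertools import accumulate

# Bucket shares copied from the distribution table, in percent.
buckets = [
    ("Within 7 days", 14),
    ("8-21 days", 39),
    ("22-45 days", 28),
    ("46-90 days", 11),
    ("No citation after 90 days", 8),
]

def cumulative_shares(rows):
    """Running total of the percentage column, paired with each bucket label."""
    totals = accumulate(share for _, share in rows)
    return [(label, total) for (label, _), total in zip(rows, totals)]

def median_bucket(rows):
    """First bucket at which the running total reaches 50% of pages."""
    for label, total in cumulative_shares(rows):
        if total >= 50:
            return label
    return None

for label, total in cumulative_shares(buckets):
    print(f"{label:<28} {total:>3}% of pages by this point")
print("Median falls in:", median_bucket(buckets))
```

The running totals come to 14%, 53%, 81%, 92%, and 100%, so the median lands in the 8-21 day bucket, consistent with the 19-day figure.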

The Citation-to-Click Recovery Math

This is the metric CMOs actually care about. When your page gets cited in AI Overview, does click volume recover?

Across our 28 pages, the average recovery curve looked like this:

- Pre-AI Overview baseline: 100 clicks/day (indexed to 100)

- After AI Overview rollout, pre-citation: 58 clicks/day (42% loss)

- After citation appears: 84 clicks/day (16% loss vs baseline, a 45% lift over the pre-citation level, about 62% of lost clicks recovered)

- After citation stabilises for 30+ days: 91 clicks/day (9% loss vs baseline)

Translation: a consistently cited page wins back roughly 80% of the clicks it lost (33 of the 42 lost per 100), settling at about 91% of its pre-AI Overview click volume. Not 100%. But a significant chunk. Citation is worth fighting for.
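Three different ratios can be read off those indexed figures, and they are easy to conflate: share of baseline, share of lost clicks won back, and lift over the pre-citation trough. A small sketch to keep them apart (`recovery_metrics` is a hypothetical helper; the inputs are the indexed values from the list above):

```python
def recovery_metrics(baseline, trough, current):
    """Express a click level three ways, relative to baseline and trough."""
    lost = baseline - trough
    regained = current - trough
    return {
        "share_of_baseline": current / baseline,
        "share_of_lost_recovered": regained / lost,
        "lift_vs_trough": regained / trough,
    }

BASELINE, TROUGH = 100, 58  # indexed clicks/day before rollout and before citation

first_citation = recovery_metrics(BASELINE, TROUGH, 84)
stabilised = recovery_metrics(BASELINE, TROUGH, 91)

for name, metrics in (("first citation", first_citation), ("stabilised", stabilised)):
    print(name, {key: f"{value:.0%}" for key, value in metrics.items()})
```

At 84 clicks/day the page is at 84% of baseline, has regained about 62% of what it lost, and is up about 45% on the trough. At 91 clicks/day those become 91%, about 79%, and about 57%.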

Common Mistakes We See on Audit Calls

Every week we audit 2-3 new prospect sites. The same mistakes repeat.

- Over-optimising for “People Also Ask” format. PAA and AI Overview have different selection logic. Writing every paragraph as a question-and-answer block tanks your topical authority signal.

- Generic data that isn’t actually original. Citing “HubSpot says” or “Statista reports” is fine as support, but the AI Overview layer prefers pages that add original data or frameworks on top. Aggregation-only content is getting filtered.

- AI-written content with no source attribution. The generative layer is learning to detect and deprioritise unsourced AI content. Pages with clear author bylines, linked methodology, and verifiable claims are winning.

- Ignoring entity disambiguation. If your page is about “Apple” the company but doesn’t make that clear in the first paragraph with entity-disambiguating context (founder name, ticker, product line), Google may not surface it for company queries.

- Thin “AI Overview optimization” pages. Every agency published one in 2024. They all rank poorly now because they were written from guesswork. Originality in this space is rewarded fast.

What’s Coming Next (Based on Google’s November 2025 Signals)

Some forward-looking observations from our most recent Monday Reports.

Multi-modal citations are increasing. In October-November 2025, we started seeing YouTube videos cited alongside text pages in the AI Overview. If you have video content, add transcripts and detailed descriptions. Four clients saw their YouTube uploads get cited for queries where their written content ranked lower.

Freshness weighting increased. Pages updated within the last 90 days saw 1.7× higher citation rates than pages unchanged for 12+ months, even when content was otherwise identical. Update cycles matter now.

Author authority signals are strengthening. Pages with linked author profiles, clear credentials, and cross-site author consistency (same author name and bio across Medium, LinkedIn, guest posts, own site) are winning disproportionate citations. This is where personal brand investment pays off.

Frequently Asked Questions

Does FAQ schema help rank in Google AI Overview?

Barely. Our 28-page dataset showed a correlation of just 0.08 between FAQ schema presence and citation rate. Schema is hygiene. The real levers are prose structure, brand mention velocity, and original data. Don’t remove existing schema, but don’t expect adding it to move citation rate.

How quickly can a rewritten page get cited in AI Overview?

Median time to first citation after a structural rewrite is 19 days in our data. 53% of pages saw first citation within 21 days. If you’re at 45+ days with no citation, investigate off-page signals (brand mentions, topical authority) rather than rewriting again.

What’s the single highest-impact change I can make this week?

Rewrite the opening 300 words of your highest-traffic informational page to include one specific number, dated claim, or named methodology. This single change moved 9 of our 28 pages from uncited to cited within 30 days.

Do I need llms.txt to be cited in AI Overview?

Not yet. It’s an emerging signal that some LLM-specific crawlers respect, but Google’s AI Overview does not currently require it. Adding it is low-cost future-proofing, not an immediate ranking lever.

Does AI Overview replace the #1 blue-link ranking?

Not entirely. Pages cited in AI Overview win back roughly 80% of the clicks they lost after rollout, settling at about 91% of pre-rollout click volume in our data. The AI Overview itself also gets 2-5× the impressions of the old Position 1, so the absolute click numbers on citation can exceed pre-rollout blue-link clicks in some cases. But on average, citation is a partial recovery, not a full replacement.

Is long-form content still better for AI Overview?

Length isn’t the predictor. Passage density is. A 1,200-word article with 8 well-structured claim+evidence paragraphs will outperform a 4,500-word article that’s mostly connective tissue. Write to the information, not to a word count.

How do I track AI Overview citations at scale?

Google Search Console’s “AI Overview impressions” filter is the primary source. Supplement with tools like Ziptie, Otterly, or manual sampling across a rotating set of priority queries. We sample 50 priority queries weekly per client to estimate citation share of voice.
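For the manual sampling part, a share-of-voice estimate is just a ratio over the sampled queries. The sketch below assumes one record per sampled query, noting whether an AI Overview appeared and which domains it cited. The record shape, the `citation_share_of_voice` name, and the choice to divide by queries that showed an Overview (rather than all sampled queries) are our assumptions for illustration.

```python
def citation_share_of_voice(samples, domain):
    """Fraction of sampled queries with an AI Overview in which `domain` is cited."""
    with_overview = [s for s in samples if s["overview_shown"]]
    if not with_overview:
        return 0.0
    cited = sum(1 for s in with_overview if domain in s["cited_domains"])
    return cited / len(with_overview)

# Hypothetical weekly sample; a real run would cover around 50 priority queries.
samples = [
    {"query": "rank in ai overview", "overview_shown": True,
     "cited_domains": {"example.com", "competitor.io"}},
    {"query": "faq schema ai overview", "overview_shown": True,
     "cited_domains": {"competitor.io"}},
    {"query": "brand mention velocity", "overview_shown": False,
     "cited_domains": set()},
    {"query": "ai overview click loss", "overview_shown": True,
     "cited_domains": {"example.com"}},
]

share = citation_share_of_voice(samples, "example.com")
print(f"Citation share of voice: {share:.0%}")
```

Tracking the same query set week over week matters more than the absolute number, since a rotating sample will shift the ratio on its own.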

Can paid links or PR push brand mention velocity?

Paid content placements count as mentions if they’re genuine editorial features, not sponsored-post disclaimers. Founder-led earned PR (podcasts, guest essays, expert quotes in journalist pieces) moves velocity fastest in our experience. Press release distribution services move it least.

Does the 47-brand-mention threshold apply to every industry?

It’s the inflection point in our 28-page dataset across 9 industries. In more competitive niches (legal, fintech, SaaS), the practical threshold seems higher (60-80 mentions). In smaller niches (regional services, niche B2B), it may be lower (25-35). Track relative to direct competitors, not to an absolute number.

Do I need a large brand to get cited?

No. Six of our 28 tracked pages belong to MSMEs with under ₹10 crore annual revenue. They get cited because their content has original data and their niche brand mention velocity exceeds competitors in the same niche, even though absolute mention count is modest.

Should I use AI to write AI Overview-optimised content?

Mixed. AI-drafted content with strong human editing, clear sourcing, author attribution, and original data layered in performs fine. Pure AI output with generic claims and no sourcing is increasingly filtered. The question isn’t “was AI involved?” It’s “does the final output contain verifiable, original information?”

How does this apply if my traffic is in languages other than English?

Our dataset includes pages in English, Hindi, French, and Spanish. The patterns held across languages. The claim+evidence structure, entity clarity, and brand velocity predictors were consistent. Schema-irrelevance was also consistent. If anything, the brand mention threshold was lower in non-English markets because overall competition density was lower.

Ready to Get Your Pages Cited?

You’ve now seen the actual predictors, not influencer guesses. If the honest thought crossing your mind is “we’ve been optimising for the wrong signals for 18 months,” you’re not alone. Most teams were. The content marketing and SEO industry moved slower than the algorithm did.

The good news: a 19-day median time to first citation means you can course-correct this quarter. The rewrite work is finite. The brand velocity work takes longer but is already budgeted in most marketing plans, just not tracked against search outcomes.

If you want help running the 23-point audit against your top pages, building the citation share-of-voice dashboard for your board, or restructuring your content calendar around citable-passage briefs, that’s literally what we do every day at KD Digital. We’ve run this playbook across 28 retainer accounts in 12 countries. The patterns hold.